Potable Water Reuse Advances with New Technologies

Since 2019, Yasuhiro Matsui has led research & development and innovation at Yokogawa Electric Corporation, examining semi-autonomous operation principles for use within potable water reuse facilities. He received his doctorate in urban engineering from The University of Tokyo and holds a Japanese professional engineering license for Water Supply & Industrial Water Supply.

Andrew Salveson is Carollo’s Water Reuse Chief Technologist. He has been honored with the WateReuse Person of the Year Award and been part of teams honored with the CWEA Research Achievement Award, the WateReuse Association Innovative Project Award, and the International Water Association Market Changing Water Technology Award. He serves on a number of expert panels with NWRI and The WRF, and he has published guidance documents on potable reuse and disinfection for WHO, NWRI, IUVA, and WEF.

Jason Assouline is a project manager at Carollo with a wide range of experience on water reuse and drinking water projects. He is Carollo’s water reuse technical practice design excellence lead and quality manager as well as a regional lead within the Carollo Research Group. Over the past 17 years, Jason’s project work has included Water Research Foundation research projects, pilot plant design and operation, full scale treatment plant design and operation, and construction management.

A new generation of ecological challenges (climate change, growing populations, and a shortage of natural resources) is increasing the need for water agencies, their constituents, and the entire populace to implement strategies for continued water sufficiency. Reservoirs and groundwater sources relied upon for decades are rapidly depleting and, in some especially impacted areas, are disappearing entirely.

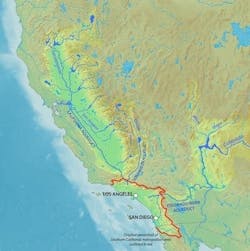

Southern California, in a constant state of drought and with a population of more than 20 million, is highly dependent on water imported from Northern California and the Colorado River. However, cyclical droughts affect both of these supplies, which are further threatened by changing environmental conditions and water overdraw (Figure 1).

California is only one example, as other regions throughout the United States and globally are coming to grips with the reality that supply sources and consumption behaviors must change to sustain availability of water resources for the future.

Moving to Sustainability

Since the early 2000s, regional water boards in select areas have steadily increased production and use of recycled water. The City of Los Angeles’s Green New Deal of April 2019 is a particularly aggressive policy that mandates sourcing 70% of water locally and recycling 150,000 acre-feet per year, roughly 10% of total consumption. This and similar goals can only be reached through advanced water treatment (AWT), which often includes ultrafiltration (UF), reverse osmosis (RO), and ultraviolet advanced oxidation processes (UV AOP). These technologies are driving current and future prospects for potable water reuse.

There are two main methods for converting wastewater to potable water: indirect potable reuse (IPR) and direct potable reuse (DPR). IPR involves releasing treated wastewater into a strategic environmental buffer, such as a reservoir or aquifer, whereas DPR, as its name implies, involves direct transfer of highly purified wastewater to the local potable distribution system. Each method has benefits and challenges:

- DPR does not require as much piping or pumping as IPR, translating to lower capital and operating costs, along with a reduced carbon footprint.

- IPR has fewer regulatory barriers because it is more widely adopted: organizations throughout California, Texas, Florida, Virginia, and Australia operate fully permitted IPR systems.

- DPR is growing in popularity, especially in locations where changing environmental conditions of intermediary reservoirs limit how, when, and where IPR is possible.

Despite historically negative public perception of IPR, and of DPR to a greater extent, necessity and wider availability of public information are priming potable water reuse for continued growth.

Through these methods, agencies can capture wastewater and return high-quality potable water, reducing the impacts of drought and population growth on water supply. Moreover, technological innovations in treatment, monitoring, and control systems are enabling more efficient and reliable production of potable water.

Adoption Challenges

Full access to IPR and DPR AWT solutions is still out of reach for some water districts because of large capital improvement and maintenance costs and high operational complexity. In addition, many municipalities cannot carry out the timely water sampling that DPR requires.

As it stands now, municipalities rely on operational surrogates, such as turbidity or disinfectant concentration multiplied by contact time (CT), to monitor pathogen treatment. This is because it can take weeks or even months to receive lab results for contaminants that are especially difficult to measure, including tests for the required log10 reduction values of viruses, Giardia, and Cryptosporidium. By the time results arrive, it is too late to use them to decide whether the treated water is fit for potable reuse, because they no longer reflect current process conditions.
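The two quantities named above can be sketched in a few lines. This is a minimal illustration, assuming CT is expressed in mg·min/L and that a log10 reduction value (LRV) is computed as log10 of influent over effluent concentration; the function names and all input numbers are hypothetical and are not taken from the article or from any regulation.

```python
import math


def ct_value(residual_mg_per_l: float, contact_time_min: float) -> float:
    """CT in mg*min/L: disinfectant residual multiplied by contact time."""
    return residual_mg_per_l * contact_time_min


def log_reduction_value(influent_conc: float, effluent_conc: float) -> float:
    """LRV = log10(C_in / C_out); both concentrations in the same units."""
    if influent_conc <= 0 or effluent_conc <= 0:
        raise ValueError("concentrations must be positive")
    return math.log10(influent_conc / effluent_conc)


# Illustrative inputs only (assumed values, not measured data).
ct = ct_value(residual_mg_per_l=1.5, contact_time_min=20.0)
lrv = log_reduction_value(influent_conc=1e5, effluent_conc=1e1)
print(f"CT  = {ct:.1f} mg*min/L")
print(f"LRV = {lrv:.1f} log10")
```

CT can be computed continuously from online instruments, which is why it serves as a surrogate; the LRV requires an actual organism count on both sides of the barrier, which is the slow step the article describes.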

Escaping this paradigm is not easy for water providers because readily available lab technology cannot detect these pathogens at very low concentrations, such as those found downstream of AWT equipment. To effectively inform operations, staff require quick and reliable results from pathogen detection devices.
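One way to see why low concentrations are the obstacle: when no organisms are found in the effluent, the largest reduction a test can demonstrate is capped by its detection limit. The sketch below assumes a simple model in which the detection limit is one organism per analyzed sample volume; the function names, the model, and the numbers are illustrative assumptions, not the method used by any particular analyzer.

```python
import math


def detection_limit(sample_volume_l: float, min_count: int = 1) -> float:
    """Lowest observable concentration (organisms/L) for one sample,
    assuming at least `min_count` organisms must be counted."""
    if sample_volume_l <= 0:
        raise ValueError("sample volume must be positive")
    return min_count / sample_volume_l


def demonstrable_lrv(influent_conc: float, sample_volume_l: float) -> float:
    """Largest LRV that a non-detect in the effluent can demonstrate."""
    return math.log10(influent_conc / detection_limit(sample_volume_l))


influent = 1e4  # organisms/L upstream of the barrier (assumed value)
for volume in (1.0, 10.0, 100.0):
    lrv = demonstrable_lrv(influent, volume)
    print(f"{volume:6.1f} L sample -> demonstrable LRV >= {lrv:.1f}")
```

Under this model, each tenfold increase in analyzed volume (or tenfold improvement in sensitivity) adds one log to the reduction that can be demonstrated, which is why high-sensitivity detection matters downstream of AWT.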

The full AWT process includes primary treatment at a water reclamation facility (WRF), with microbial anaerobic and aerobic decomposition, and settling in a secondary clarifier. The effluent water from the WRF then undergoes AWT, with technologies such as UF, RO, and UV AOP (Figure 2).

By implementing newer analyzer technology to detect microorganisms downstream of AWT processes, staff can verify whether those processes are functioning properly.

Potable reuse and efficient AWT are becoming increasingly critical in areas threatened by water shortages. But without reliable pathogen detection in near real time, there is often no choice but to accept the log reduction credit allocations assigned by the appropriate regulatory authority, while increasingly stringent requirements dictate the credits that AWT processes must earn.

UF and RO receive zero and minimal credits, respectively, for virus treatment. As a result, AWT requires the installation of multiple mechanical barriers to reach the credit total that regulators require.

However, overtreatment and operating costs can be reduced if water quality is proven to meet requirements post-UF, or anywhere else throughout the AWT process.
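The credit accounting described above can be sketched as a simple sum over barriers. In this sketch the UF credit of zero follows the article; every other number (the RO and UV AOP credits, the required total, and the measured UF value) is a hypothetical placeholder, and the idea that a measured LRV could replace an assigned credit is an assumption made for illustration rather than a statement of any regulator's policy.

```python
from typing import Dict

# Virus log reduction credits per barrier. UF = 0 follows the article;
# the RO and UV AOP values are placeholders, not regulatory figures.
ASSIGNED_VIRUS_CREDITS: Dict[str, float] = {"UF": 0.0, "RO": 1.5, "UV AOP": 6.0}
REQUIRED_VIRUS_CREDITS = 10.0  # hypothetical target


def total_credits(credits: Dict[str, float]) -> float:
    """Sum of log reduction credits across all barriers in the train."""
    return sum(credits.values())


def shortfall(credits: Dict[str, float], required: float) -> float:
    """Credits still missing; extra barriers would have to supply these."""
    return max(0.0, required - total_credits(credits))


def with_demonstrated(credits: Dict[str, float], barrier: str,
                      measured_lrv: float) -> Dict[str, float]:
    """Copy of `credits` where a measured LRV replaces a lower assigned one."""
    updated = dict(credits)
    updated[barrier] = max(updated.get(barrier, 0.0), measured_lrv)
    return updated


print(shortfall(ASSIGNED_VIRUS_CREDITS, REQUIRED_VIRUS_CREDITS))
proven = with_demonstrated(ASSIGNED_VIRUS_CREDITS, "UF", measured_lrv=3.0)
print(shortfall(proven, REQUIRED_VIRUS_CREDITS))
```

With these placeholder numbers, the assigned credits leave a gap that an additional barrier would need to fill, while a demonstrated reduction across UF closes it without added equipment.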

Test Results in Hours Instead of a Month

To address these and other issues, Yokogawa is tapping into medical fluorescence scanning technology for detecting pathogens in extremely low concentrations downstream of filtration systems, such as UF. These devices reduce the time required for test results from a month or more to less than half a day, providing the means for confident and timely decisions regarding the fitness of water for potable reuse in both IPR and DPR systems.

To test water quality, technicians take a grab sample and run it through a rapid assessment pathogen identification (RAPID) high-sensitivity fluorescence scanner (Figure 3). This new analytical tool improves overall confidence in AWT and reduces the response retention time required when storing purified recycled water, such as in groundwater aquifers or surface water reservoirs, while cutting overall capital and operating expenditures.

Rapid testing identifies log10 reduction values of viruses, Giardia, and Cryptosporidium, and the device integrates easily with other smart sensors and highly digitized plants. When sample results are fed into a network-connected fluorescence scanner, components at the industrial edge can be utilized for off-premises communication.

This enables advanced data analysis through cloud software and secure remote connectivity for making near real-time operational adjustments. Additionally, these specialized scanners are equipped with built-in data cleansing, curation, analysis, and modeling tools for continuous process optimization.

Gateway to Potable Reuse

Rapid fluorescence scanning shows promise for making IPR and DPR more feasible to implement, without the financial and operational burdens of excess treatment equipment. Additionally, it increases confidence in the treatment methods employed in potable reuse systems, improving the public’s perception of water safety over time.

Although detecting pathogens at very low concentrations, such as downstream of UF, remains a challenge, scanning technologies are improving in speed and accuracy with each passing year. Following considerable advances in treatment technology and filtration media, digital methods for analysis are proving the widespread feasibility of AWT for municipalities and water districts worldwide (see Figure 4 at top of article).

These pathogen detection technologies are essential for utilities to implement wastewater treatment for potable reuse in a cost-effective manner. As part of digital transformation at multiple organizations, the impact of this new technology on real-time operations is being actively assessed and monitored. The prospects for sustainable water yield appear hopeful as artificial intelligence and advanced analytics play a key role in increasing reuse of our most critical natural resource.